The Short Skirt/Long Jacket Problem

Passing along information without understanding it.

This has been percolating for a while, and I’ve found it hard to write about because it’s a subtle thing that is easier to feel than to put into concrete words. The thing is this: passing along information without really interacting with it.

Sometimes you need a bit of info, and you ask the person who can get the answer for you. Sometimes all you need is copy/paste. A fact. A figure. A yes/no. But it can also be helpful, and ultimately more efficient, if the person between the info and you understands the information that they’re passing along.

How so?

- They can question. Did the information actually answer the question? Is it unclear? Does the answer elicit another question? Or is it unclear, but unlikely ever to get clearer? (This happened recently: someone was still unclear about an answer the second time we asked, so we surrendered.)

- They can change the info for the better. They might be able to edit the info based on something they understand about it. Sometimes the intermediary can curate the information because they know the context in which you’re asking, or some other contextual tidbit.

- They can sanity-check. Does it even make sense? Can’t tell you how many times I’ve gotten an answer or some feedback that just didn’t make any sense. The person in the middle can catch this.

And what’s the benefit?

This makes the information more useful, clear, specific, etc. for everybody involved.

It also saves time: unclear information gets caught in the middle instead of traveling all the way to the end and then looping back for clarity.

When someone passes along information without understanding or thinking about it, it just kicks the can down the road. This is something I’ve seen happen in larger business structures, but it also helps explain something I’ve noticed about AI.

The Short Skirt/Long Jacket Problem

I’ve seen several instances of people using AI where it comes up with a completely coherent, factual response. When you first read it, you say, yes, this is great. However, once you sit down with it to think about the implications, you realize it’s actually not useful at all. It’s a strange-feeling disconnect. And I think the reason is the same: these LLMs are also passing information through to you without actually understanding it. Coherence is not enough.

There’s a professional sheen on the outside (long jacket) but not enough material underneath (short skirt). In typical fashion, I wrote all this before I bothered to search it, and it turns out this is called “coherent nonsense” or the “coherence trap.” Here is what popped up in my search:

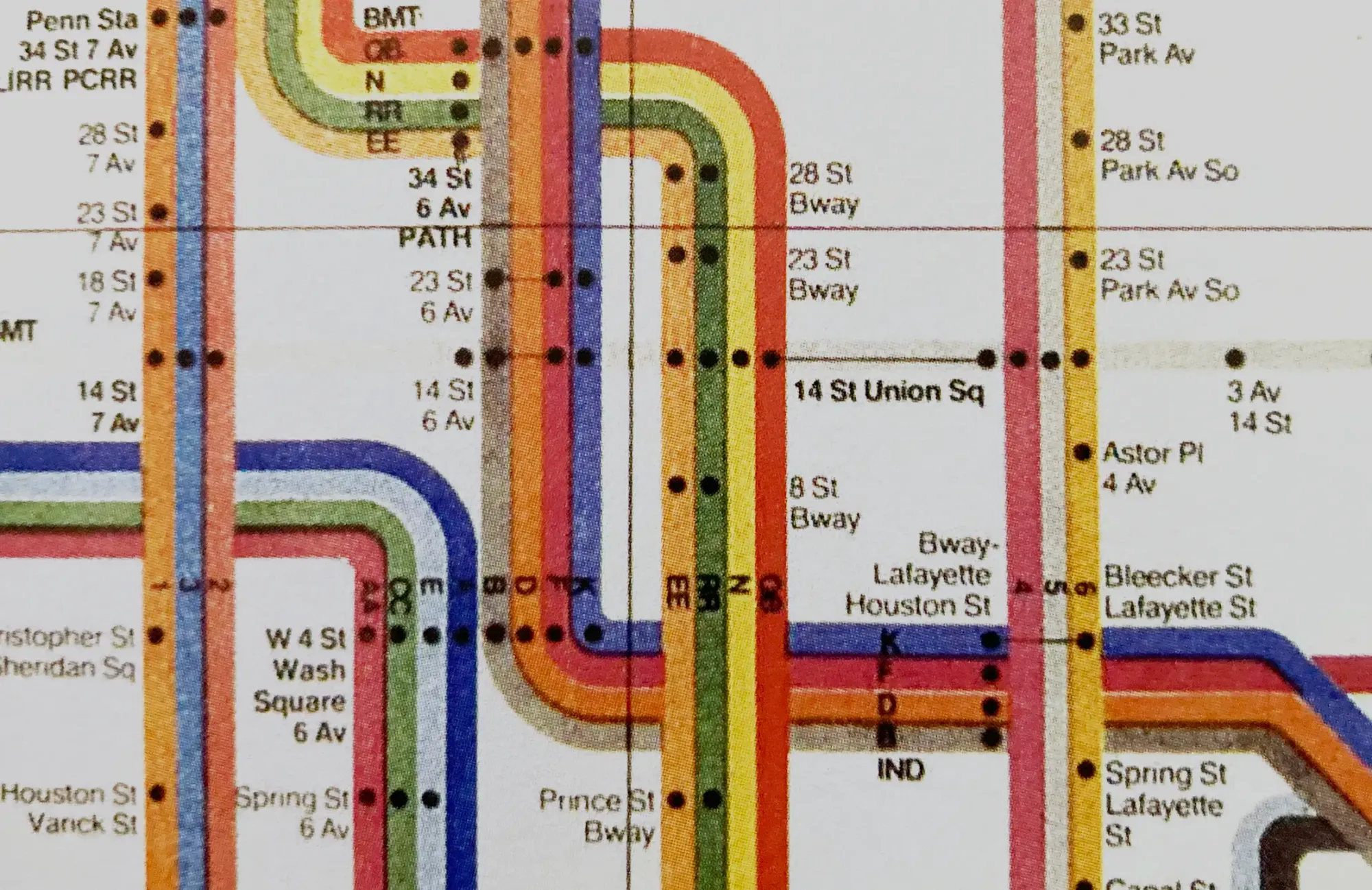

A great example from the MIT article quoted below is LLM-generated driving directions. LLMs learn from language-based data. Therefore, when street closures or detours happen, the directions can break down. The model only understands the structure from how people talk about it, rather than from an underlying map. Note: "transformer" = a type of generative AI model.

“For example, they found that a transformer can predict valid moves in a game of Connect 4 nearly every time without understanding any of the rules.” – MIT

I'll leave you with this classic, which is not illustrative of the problem, but immediately popped into my head as I was thinking about this.